High-performance PowerScale workloads just gained a substantial new advantage. The all-flash F910 platform, in combination with the new OneFS 9.13 release, now supports next-generation 400GbE networking, enabling faster data movement and smoother scalability. Whether powering AI pipelines or high-throughput analytics, 400GbE provides the headroom a high-performance cluster needs to grow.

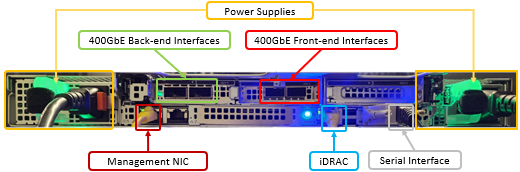

Native support for 400GbE front-end and/or back-end networking on the F910 helps deliver next-generation bandwidth for performance-intensive workloads such as AI/ML pipelines, HPC, and high-throughput analytics.

This capability enables faster data movement and cluster expansion without architectural redesign, and can leverage an existing NVIDIA SN5600 switch fabric if one is already in place. The 400GbE implementation supports RDMA over Converged Ethernet (RoCEv2) for low-latency data transfers, jumbo frames with MTU sizes up to 9,000 bytes, and full compatibility with OneFS 9.13 or later releases using Dell-approved QSFP112 optical modules. Clusters can scale up to 128 nodes while maintaining deterministic performance across Ethernet subnets.
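To put the jumbo-frame point in numbers, here is a small back-of-the-envelope sketch of raw Ethernet frame rates at a 400 Gb/s line rate. It assumes untagged, full-size frames and the standard IEEE 802.3 per-frame overhead (14-byte header, 4-byte FCS, 8-byte preamble/SFD, 12-byte inter-frame gap); it does not model RoCEv2 or IP/UDP headers, and the figures are theoretical wire-level values, not PowerScale throughput measurements.

```python
# Back-of-the-envelope Ethernet frame-rate math at a given line rate.
# Assumption: untagged frames, standard IEEE 802.3 overhead per frame:
# 14 B header + 4 B FCS + 8 B preamble/SFD + 12 B inter-frame gap.
ETH_OVERHEAD_BYTES = 14 + 4 + 8 + 12  # 38 bytes on the wire per frame


def frames_per_second(line_rate_gbps: float, mtu: int) -> float:
    """Maximum rate of MTU-sized frames the link can carry."""
    bits_per_frame = (mtu + ETH_OVERHEAD_BYTES) * 8
    return line_rate_gbps * 1e9 / bits_per_frame


def payload_efficiency(mtu: int) -> float:
    """Fraction of wire bandwidth that carries the MTU-sized payload."""
    return mtu / (mtu + ETH_OVERHEAD_BYTES)


if __name__ == "__main__":
    for mtu in (1500, 9000):
        fps = frames_per_second(400, mtu)
        print(f"MTU {mtu}: {fps / 1e6:.2f} Mpps, "
              f"{payload_efficiency(mtu):.2%} efficiency")
```

This prints `MTU 1500: 32.51 Mpps, 97.53% efficiency` and `MTU 9000: 5.53 Mpps, 99.58% efficiency`. The efficiency gain is modest, but a 9,000-byte MTU cuts the number of frames the NIC and host must process per second by roughly a factor of six.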

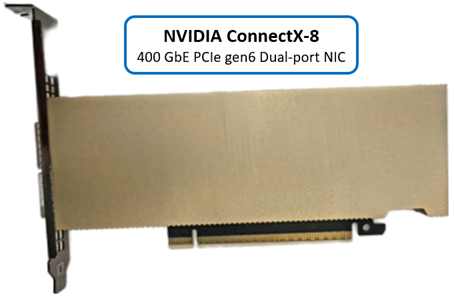

With OneFS 9.13, 400Gb Ethernet is supported on the F910 platform using the NVIDIA ConnectX-8 (CX-8) PCIe Gen6 network interface controller (NIC).

Under the hood, OneFS adds a 400GbE LNI type and a corresponding aggregate type. The CX-8 is registered as an ‘MCE 0123’ NIC and works just like any other front-end or back-end NIC. It also uses the same driver as earlier generations of NVIDIA ConnectX cards, albeit at a higher bandwidth, and its interfaces are reported as ‘400gige’. For example:

```
# isi network interface list
LNN  Name       Status      VLAN ID  Owners                   Owner Type  IP Addresses
----------------------------------------------------------------------------------------
1    400gige-1  Up          -        groupnet0.subnet1.pool0  Static      10.20.30.51
1    400gige-2  Up          -        -                        -           -
1    mgmt-1     Up          -        groupnet0.subnet0.pool0  -           172.1.1.51
1    mgmt-2     No Carrier  -        -                        -           -
2    400gige-1  Up          -        groupnet0.subnet1.pool0  Static      10.20.30.52
2    400gige-2  Up          -        -                        -           -
2    mgmt-1     Up          -        groupnet0.subnet0.pool0  -           172.1.1.52
2    mgmt-2     No Carrier  -        -                        -           -
3    400gige-1  Up          -        groupnet0.subnet1.pool0  Static      10.20.30.53
3    400gige-2  Up          -        -                        -           -
3    mgmt-1     Up          -        groupnet0.subnet0.pool0  -           172.1.1.53
3    mgmt-2     No Carrier  -        -                        -           -
----------------------------------------------------------------------------------------
Total: 12
```
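Because the 400GbE ports show up as ordinary `400gige-N` interfaces, existing monitoring scripts only need to match the new name prefix. The sketch below parses text in the layout of the listing above and reports any 400GbE interface that is not `Up`. It assumes one interface per line with single-token VLAN, Owners, Owner Type, and IP columns (one IP per interface); the `Interface` class and helper names are hypothetical, and the exact CLI layout may differ between OneFS releases.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Interface:  # hypothetical record, not a OneFS API object
    lnn: int
    name: str
    status: str
    owners: str
    ip: str


def parse_interfaces(text: str) -> list[Interface]:
    """Parse 'isi network interface list'-style text, one row per line."""
    rows = []
    for line in text.splitlines():
        tokens = line.split()
        # Data rows begin with a numeric LNN; skip headers, rules, totals.
        if len(tokens) < 7 or not tokens[0].isdigit():
            continue
        rows.append(Interface(
            lnn=int(tokens[0]),
            name=tokens[1],
            # Status may be one or two words ("Up", "No Carrier"); the last
            # four columns (VLAN ID, Owners, Owner Type, IP) are one token each.
            status=" ".join(tokens[2:-4]),
            owners=tokens[-3],
            ip=tokens[-1],
        ))
    return rows


def not_up_400gige(rows: list[Interface]) -> list[tuple[int, str]]:
    """Return (LNN, name) for every 400GbE interface that is not 'Up'."""
    return [(r.lnn, r.name) for r in rows
            if r.name.startswith("400gige") and r.status != "Up"]


SAMPLE = """\
LNN  Name       Status      VLAN ID  Owners                   Owner Type  IP Addresses
1    400gige-1  Up          -        groupnet0.subnet1.pool0  Static      10.20.30.51
1    400gige-2  Up          -        -                        -           -
1    mgmt-2     No Carrier  -        -                        -           -
Total: 3
"""

if __name__ == "__main__":
    rows = parse_interfaces(SAMPLE)
    print(len(rows), not_up_400gige(rows))
```

Splitting from both ends of each row, rather than on fixed column offsets, keeps the two-word `No Carrier` status intact without depending on exact column widths.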

Note that unlike the 200GbE ConnectX‑6 adapter, which supports dual‑personality operation (either Ethernet or InfiniBand) on the F-series nodes, the new 400Gbps ConnectX‑8 NIC is currently Ethernet‑only for PowerScale.

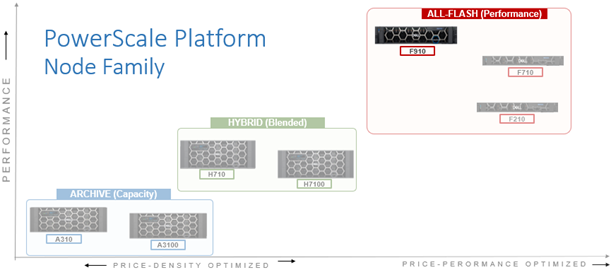

While 400GbE support in OneFS 9.13 is limited to the PowerScale F910 platform at launch, support for the F710 nodes and the PA110 accelerator is planned for an upcoming release.

Functionally, this new NIC family is similar to previous generations; the primary difference is the higher line rate for high‑performance workloads. The NVIDIA Spectrum‑4 SN5600 switch is required for the back‑end fabric, and because leaf‑spine topologies are not yet supported, cluster scalability is currently limited to 128 nodes.

Support for Dell Ethernet switches will be introduced in a future release but is not part of the current offering. Additionally, these new 400GbE NICs cannot interoperate with 100GbE switches such as the Dell S5232, Z9100, or Z9264 series, because the technological differences between the 100Gb and 400Gb Ethernet generations are too great to maintain compatibility. In OneFS, the adapters appear like any other NIC, identified simply as 400GbE interfaces.

Installation of 400GbE networking requires an F910 node pool running OneFS 9.13 or later, plus transceivers, cables, and an NVIDIA Spectrum-4 SN5600 switch:

| Component | Requirement |

| Platform node | · F910 with NVIDIA CX-8 NICs (front-end and/or back-end)

· Cluster limited to a maximum of 128 nodes |

| OneFS version | · OneFS 9.13 or later |

| Node firmware | · NFP 13.2 with IDRAC 7.20.80.50 |

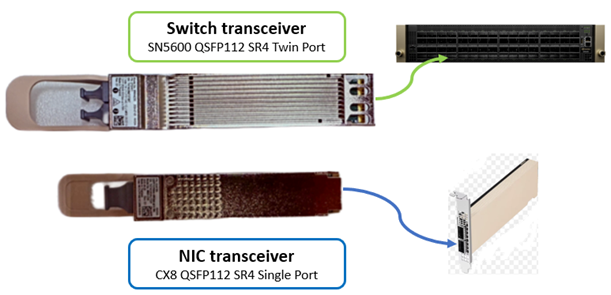

| NIC transceiver | · CX8 QSFP112 SR4 – Dell PN 024F8N (same as NVIDIA MMA1Z00-NS400) |

| Switch transceiver | · SN5600 QSFP112 SR4 Twin Port – Dell PN 070HK1 (same as NVIDIA MMA4Z00-NS) |

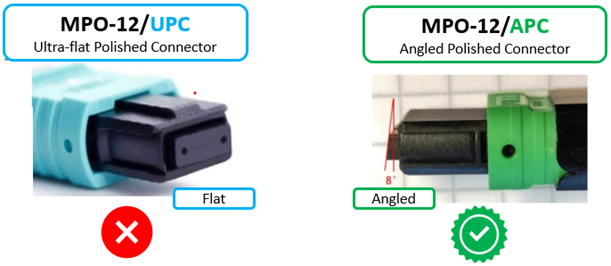

| Cable | · Multimode APC Cables with MPO-12/APC connectors (no AOC cables) |

| Switch | · NVIDIA Spectrum-4 SN5600 |

Additionally, the F910 nodes must run the latest node firmware package (NFP) so that the iDRAC can properly support the CX-8 card.
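The requirements table lends itself to a simple pre-flight check. The following is a minimal sketch that encodes the table's platform, node-count, OneFS, NFP, iDRAC, and switch entries. The `NodePool` record and helper names are hypothetical (this is not a OneFS API), the values would have to be gathered by hand or from your own inventory tooling, and treating the listed versions as minimums rather than exact versions is an assumption.

```python
from __future__ import annotations

from dataclasses import dataclass

# Values from the requirements table. Treating versions as minimums is an
# assumption; the table lists NFP 13.2 with iDRAC 7.20.80.50.
MAX_NODES = 128
MIN_ONEFS = (9, 13)
MIN_NFP = (13, 2)
MIN_IDRAC = (7, 20, 80, 50)
SUPPORTED_PLATFORMS = {"F910"}
REQUIRED_SWITCH = "SN5600"


def parse_version(text: str) -> tuple[int, ...]:
    """Turn '9.13.0.0' into (9, 13, 0, 0) so tuples compare numerically."""
    return tuple(int(part) for part in text.split("."))


@dataclass
class NodePool:  # hypothetical inventory record
    platform: str
    node_count: int
    onefs: str
    nfp: str
    idrac: str
    switch: str


def readiness_issues(pool: NodePool) -> list[str]:
    """Return a list of unmet 400GbE requirements (empty means ready)."""
    issues = []
    if pool.platform not in SUPPORTED_PLATFORMS:
        issues.append(f"platform {pool.platform}: 400GbE is F910-only at launch")
    if pool.node_count > MAX_NODES:
        issues.append(f"{pool.node_count} nodes exceeds the {MAX_NODES}-node limit")
    if parse_version(pool.onefs) < MIN_ONEFS:
        issues.append(f"OneFS {pool.onefs} is older than 9.13")
    if parse_version(pool.nfp) < MIN_NFP:
        issues.append(f"NFP {pool.nfp} is older than 13.2")
    if parse_version(pool.idrac) < MIN_IDRAC:
        issues.append(f"iDRAC {pool.idrac} is older than 7.20.80.50")
    if pool.switch != REQUIRED_SWITCH:
        issues.append(f"switch {pool.switch}: NVIDIA Spectrum-4 SN5600 required")
    return issues


if __name__ == "__main__":
    pool = NodePool("F910", 8, "9.13.0.0", "13.2", "7.20.80.50", "SN5600")
    print(readiness_issues(pool) or "ready")
```

Comparing versions as integer tuples avoids the classic string-comparison trap where "9.9" would sort after "9.13".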

The current PowerScale 400GbE solution continues to use standard multimode optical modules, including the larger twin‑port module for the switch and the smaller QSFP‑112 SR4 module for the network adapter.

The Dell transceiver components are equivalent to the NVIDIA parts, as they are manufactured to the same specifications and differ only in part number.

With the 400GbE generation, active optical cables (AOCs) are no longer supported. The increasing size and mechanical mass of the optical modules make AOCs unreliable and prone to damage at these speeds, so the architecture shifts fully to discrete optical transceivers and fiber cabling.

A significant consideration for new 400GbE PowerScale deployments is the transition to MPO‑12 APC (Angled Physical Contact) cabling. These connectors are identifiable by a green release sleeve, in contrast to the blue sleeve used on traditional UPC (Ultra Physical Contact) variants.

APC cabling provides improved signal integrity. However, because APC and UPC connectors are mechanically compatible, a UPC cable can still be physically plugged into a 400GbE port.

Warning: Using a UPC cable in a 400GbE port will result in degraded signal quality or link instability. Installers should therefore verify that APC‑type MPO‑12 cables are used throughout a PowerScale cluster’s 400Gb Ethernet network.
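Since APC and UPC cables fit the same ports, a quick audit of the cabling plan before installation can catch mistakes that the hardware will not reject. The sketch below checks a hypothetical inventory list (the `CableRecord` fields and labels are illustrative assumptions, not a Dell or NVIDIA tool) for the two rules stated above: no AOCs, and MPO‑12 APC on every fiber run. Copper DACs are skipped deliberately, since this check only covers optical cabling.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CableRecord:  # hypothetical inventory entry
    label: str
    kind: str       # "fiber", "aoc", or "dac"
    connector: str  # e.g. "MPO-12"
    polish: str     # "APC" (green sleeve) or "UPC" (blue sleeve)


def audit_cabling(cables: list[CableRecord]) -> list[str]:
    """Flag AOCs and any fiber run that is not MPO-12 APC."""
    problems = []
    for c in cables:
        kind = c.kind.lower()
        if kind == "dac":
            continue  # copper DACs are outside this optical-cabling check
        if kind == "aoc":
            problems.append(f"{c.label}: AOCs are not supported at 400GbE")
        elif c.connector.upper() != "MPO-12" or c.polish.upper() != "APC":
            problems.append(
                f"{c.label}: expected MPO-12 APC, found {c.connector} {c.polish}")
    return problems


if __name__ == "__main__":
    inventory = [
        CableRecord("node1-fe0", "fiber", "MPO-12", "APC"),
        CableRecord("node2-fe0", "fiber", "MPO-12", "UPC"),
        CableRecord("node3-fe0", "aoc", "QSFP112", "n/a"),
    ]
    for line in audit_cabling(inventory):
        print(line)
```

Run against the sample inventory, only `node2-fe0` (UPC polish) and `node3-fe0` (an AOC) are reported.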

As mentioned previously, the NIC used in the F910 nodes for 400GbE connectivity is NVIDIA’s ConnectX‑8 PCIe Gen6 adapter with dual QSFP‑112 ports in a standard half‑height, half‑length form factor.

Note that this NIC’s power consumption exceeds 50 watts, which causes PowerScale F910 nodes to run their cooling fans at high speed to manage the thermal load. Additional fan noise from these 400GbE F910 nodes, compared with their 100GbE and 200GbE counterparts, is therefore expected and does not indicate a problem.

Also note that the CX-8 NIC’s onboard hardware cryptographic engine is enabled by default, although OneFS does not currently use it. This may nevertheless be a relevant consideration for certain regulatory compliance or export‑control scenarios.

Network switching for 400GbE uses the NVIDIA Spectrum-4 SN5600 switch. As mentioned previously, active optical cables (AOCs) are not available at this speed class. However, a 3‑meter direct-attach copper cable (DAC) is qualified for in‑rack connections. For front‑end connectivity, multimode optical transceivers remain the recommended option, consistent with prior generations.

Due to the thermal characteristics of 400GbE optics and NICs, systems should be expected to operate fans at maximum speed under typical workloads.

Regarding compatibility with lower-speed Ethernet connectivity, the F910’s 400GbE NIC does support 200GbE optics and cabling, and will happily down‑negotiate to 200GbE. However, it cannot down‑negotiate to 100GbE.
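The speed-compatibility rule above can be summarized as "the highest speed both ends share, and no link at all below 200GbE". The following toy model captures only that rule. It is a simplification: real IEEE 802.3 link negotiation also involves lane count, FEC mode, and media type, none of which are modeled here, and the function name is hypothetical.

```python
from __future__ import annotations

from typing import Iterable, Optional

# Speeds the CX-8 port can run at, per the text: native 400GbE and
# down-negotiation to 200GbE, but not 100GbE.
CX8_SPEEDS_GBPS = frozenset({400, 200})


def link_speed(peer_speeds_gbps: Iterable[int]) -> Optional[int]:
    """Highest speed both ends support, or None if no link can form."""
    common = CX8_SPEEDS_GBPS & set(peer_speeds_gbps)
    return max(common) if common else None


if __name__ == "__main__":
    print(link_speed({400, 200, 100}))  # 400GbE-capable peer
    print(link_speed({200, 100}))       # 200GbE peer: down-negotiates
    print(link_speed({100, 40, 25}))    # 100GbE-only peer: no link
```

This prints `400`, `200`, and `None`, matching the behavior described above, including the lack of interoperability with 100GbE switches.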