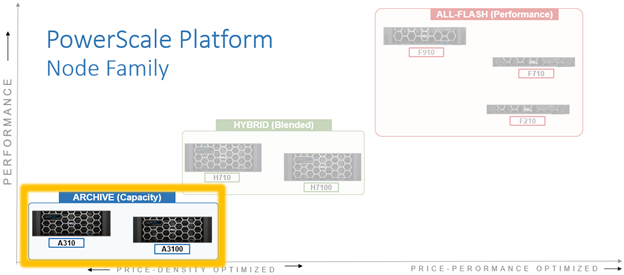

The last couple of articles generated several questions from the field around durability and resilience in the newly released PowerScale H710/7100 and A310/3100 nodes. In this article, we’ll take a deeper look at the OneFS journal and boot drive mirroring functionality in these H and A-series platforms.

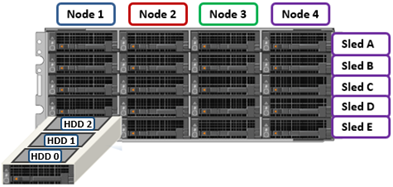

PowerScale chassis-based hardware, such as the new H710/7100 and A310/3100, stores the local filesystem journal and its mirror on persistent, battery-backed flash media within each node, with a 4RU PowerScale chassis housing four nodes. Each node comprises a ‘compute node enclosure’ for the CPU, memory, and network cards, plus associated drive containers, or sleds.

The PowerScale H and A-series employ a node-pair architecture to dramatically increase system reliability, with each pair of nodes residing within a chassis power zone. This means that if a node’s PSU fails, the peer PSU supplies redundant power. The node-pair design also drives a minimum cluster or node pool size of four nodes (one chassis) for the PowerScale H and A-series platforms, pairwise node population, and the need to scale the cluster two nodes at a time.
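The population rules above can be captured in a simple check. The following is a minimal Python sketch for illustration only; the function name `is_valid_chassis_pool_size` is hypothetical and is not a OneFS interface.

```python
def is_valid_chassis_pool_size(node_count: int) -> bool:
    """Return True if a chassis-based node pool size obeys the node-pair rules.

    Assumptions taken from the text: a pool needs at least four nodes
    (one chassis), and nodes are always added two at a time.
    """
    min_nodes = 4  # one fully populated chassis
    return node_count >= min_nodes and node_count % 2 == 0


if __name__ == "__main__":
    for count in (2, 4, 5, 6):
        print(count, is_valid_chassis_pool_size(count))
```

Under these assumptions, four and six nodes are valid pool sizes, while two (below the minimum) and five (an unpaired node) are not.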

A node’s file system journal is protected against sudden power loss or hardware failure by OneFS’ journal vault functionality, otherwise known as ‘powerfail memory persistence’, or PMP. PMP automatically stores both the local journal and journal mirror on a separate flash drive across both nodes in a node pair.

This journal de-staging process is known as ‘vaulting’, during which the journal is protected by a dedicated battery in each node until it’s safely written from DRAM to SSD on both nodes in a node-pair. With PMP, constant power isn’t required to protect the journal in a degraded state since the journal is saved to M.2 flash and mirrored on the partner node.

The mirrored journal comprises both hardware and software components, including the following constituent parts:

Journal Hardware Components

- System DRAM

- M.2 Vault Flash

- Battery Backup Unit (BBU)

- Non-Transparent Bridge (NTB) PCIe link to partner node

- Clean copy on disk

Journal Software Components

- Power-fail Memory Persistence (PMP)

- Mirrored Non-volatile Interface (MNVI)

- IFS Journal + Node State Block (NSB)

- Utilities

Asynchronous DRAM Refresh (ADR) preserves RAM contents when the operating system is not running. ADR is important for preserving RAM journal contents across reboots, and it does not require any software coordination to do so.

The journal vaulting functionality encompasses the hardware, firmware, and operating system, ensuring that the journal’s contents are preserved across power failure. The mechanism is similar to the software journal mirroring employed on the PowerScale F-series nodes, albeit using a PCIe-based NTB on the chassis-based platforms instead of the back-end network used by the all-flash nodes.

On power failure, the PMP vaulting functionality is responsible for copying both the local journal and the local copy of the partner node’s journal to persistent flash. On restoration of power, PMP is responsible for restoring the contents of both journals from flash to RAM, and notifying the operating system.
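As a rough mental model of this vault and restore cycle, consider the toy Python sketch below. It is not the OneFS implementation: the class name `PmpVaultSketch` and its dictionaries standing in for DRAM and M.2 flash are purely hypothetical.

```python
class PmpVaultSketch:
    """Toy model of PMP vault/restore; not the OneFS implementation."""

    def __init__(self, local_journal: bytes, partner_mirror: bytes) -> None:
        # During normal operation both journals live in DRAM.
        self.ram = {"local": local_journal, "partner": partner_mirror}
        self.flash: dict[str, bytes] = {}

    def vault(self) -> None:
        """Power failure: copy both journals from RAM to flash, then lose RAM."""
        self.flash = dict(self.ram)
        self.ram = {}  # DRAM contents are gone once the node powers off

    def restore(self) -> bool:
        """Power restored: copy both journals from flash back into RAM.

        Returns True when both copies were recovered, which is the point at
        which the operating system would be notified.
        """
        self.ram = dict(self.flash)
        return all(key in self.ram for key in ("local", "partner"))


if __name__ == "__main__":
    node = PmpVaultSketch(b"local-journal", b"partner-mirror")
    node.vault()
    print("restored both copies:", node.restore())
```

The point of the sketch is simply that both the local journal and the local copy of the partner’s journal make the round trip through flash together.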

A single dedicated 480GB NVMe flash device (nvd0) is attached via an M.2 slot on the motherboard of the H710/0 and A310/0 node’s compute module, residing under the battery backup unit (BBU) pack.

This is in contrast to the prior H and A-series chassis generations, which used a 128GB SATA M.2 device (/dev/ada0).

For example, the following CLI commands show the NVMe M.2 flash device in an A310 node:

# isi_hw_status | grep -i prod

Product: A310-4U-Single-96GB-1x1GE-2x25GE SFP+-60TB-1638GB SSD-SED

# nvmecontrol devlist

nvme0: Dell DN NVMe FIPS 7400 RI M.2 80 480GB

nvme0ns1 (447GB)

# gpart show | grep nvd0

=> 40 937703008 nvd0 GPT (447G)

# gpart show -l nvd0

=> 40 937703008 nvd0 GPT (447G)

40 2008 - free - (1.0M)

2048 41943040 1 isilon-pmp (20G)

41945088 895757960 - free - (427G)

In the above, the ‘isilon-pmp’ partition on the M.2 flash device is used by the file system journal for its vaulting activities.
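The sizes in the `gpart` output can be sanity-checked with a little arithmetic. The Python sketch below assumes that `gpart` is reporting 512-byte sectors on this device (an assumption, although it is consistent with the 20G and 447G figures shown); the helper name `sectors_to_gib` is hypothetical.

```python
SECTOR_BYTES = 512  # assumption: gpart reports 512-byte sectors here


def sectors_to_gib(sectors: int, sector_bytes: int = SECTOR_BYTES) -> float:
    """Convert a sector count into GiB."""
    return sectors * sector_bytes / 2**30


# Values copied from the 'gpart show -l nvd0' output above.
device_sectors = 937703008  # whole GPT area, shown as (447G)
pmp_sectors = 41943040      # isilon-pmp partition, shown as (20G)
free_sectors = 895757960    # trailing free space, shown as (427G)

if __name__ == "__main__":
    print(f"device:     {sectors_to_gib(device_sectors):.1f} GiB")
    print(f"isilon-pmp: {sectors_to_gib(pmp_sectors):.1f} GiB")
    print(f"free:       {sectors_to_gib(free_sectors):.1f} GiB")
```

In other words, the vault partition occupies only a small fraction of the M.2 device, with the bulk of the capacity left unallocated.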

The NVMe M.2 device is housed on the node compute module’s riser card, and its firmware is managed by the OneFS DSP (drive support package) framework.

Note that the entire compute module must be removed in order for its M.2 flash to be serviced. If the M.2 flash does need to be replaced for any reason, it will be properly partitioned and the PMP structure will be created as part of arming the node for vaulting.

For clusters using data-at-rest encryption (DARE), an encrypted M.2 device is used, in conjunction with SED data drives, to provide full FIPS compliance.

The battery backup unit (BBU), when fully charged, provides enough power to vault both the local and partner journal during a power failure event.

A single battery is utilized in the BBU, which also supports back-to-back vaulting.

On the software side, the journal’s Power-fail Memory Persistence (PMP) provides an equivalent to the NVRAM controller’s vault/restore capabilities to preserve the journal. The PMP partition on the M.2 flash drive provides an interface between the OS and firmware.

If a node boots and its primary journal is found to be invalid for whatever reason, it has three paths for recourse:

- Recover journal from its M.2 vault.

- Recover journal from its disk backup copy.

- Recover journal from its partner node’s mirrored copy.

The mirrored journal must guard against rolling back to a stale copy of the journal on reboot. This necessitates storing information about the state of journal copies outside the journal. As such, the Node State Block (NSB) is a persistent disk block that stores local and remote journal status (clean/dirty, valid/invalid, etc), as well as other non-journal information. NSB stores this node status outside the journal itself, and ensures that a node does not revert to a stale copy of the journal upon reboot.

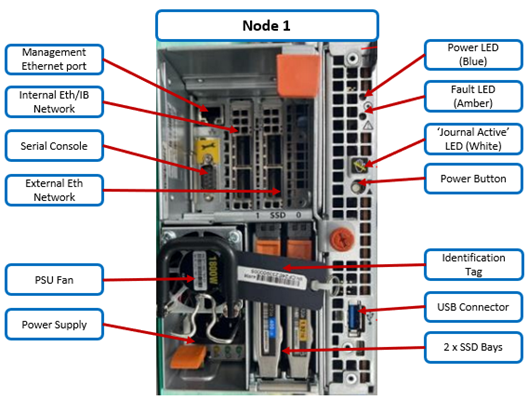

Here’s the detail of an individual node’s compute module:

Of particular note is the ‘journal active’ LED, which is displayed as a white ‘hand icon’:

When this white hand icon is illuminated, it indicates that the mirrored journal is actively vaulting, and it is not safe to remove the node!

There is also a blue ‘power’ LED, and a yellow ‘fault’ LED per node. If the blue LED is off, the node may still be in standby mode, in which case it may still be possible to pull debug information from the baseboard management controller (BMC).

The flashing yellow ‘fault’ LED has several state indication frequencies:

| Blink Speed | Blink Frequency | Indicator |
| --- | --- | --- |
| Fast blink | ¼ Hz | BIOS |
| Medium blink | 1 Hz | Extended POST |
| Slow blink | 4 Hz | Booting OS |
| Off | Off | OS running |

The mirrored non-volatile interface (MNVI) sits below /ifs and above RAM and the NTB, providing the abstraction of a reliable memory device to the /ifs journal. MNVI is responsible for synchronizing journal contents to peer node RAM, at the direction of the journal, and persisting writes to both systems while in a paired state. It upcalls into the journal on NTB link events, and notifies the journal of operation completion (mirror sync, block IO, etc). For example, when rebooting after a power outage, a node automatically loads the MNVI. It then establishes a link with its partner node and synchronizes its journal mirror across the PCIe Non-Transparent Bridge (NTB).

The non-transparent bridge (NTB) connects the two nodes in a pair for OneFS journal replication.

The NTB link itself is PCIe Gen3 x8, but there is no guarantee of NTB interoperability between different CPU generations. As such, the H710/7100 and A310/3100 use version 4 of the NTB driver, whereas the previous hardware generation uses NTBv3. This means that mixed-generation node pairs are unsupported.

Prior to mounting the /ifs file system, OneFS locates a valid copy of the journal from one of the following locations in order of preference:

| Order | Journal Location | Description |
| --- | --- | --- |
| 1st | Local disk | A local copy that has been backed up to disk |
| 2nd | Local vault | A local copy of the journal restored from Vault into DRAM |
| 3rd | Partner node | A mirror copy of the journal from the partner node |

Assuming the node was shut down cleanly, it will boot using a local disk copy of the journal. The journal will be restored into DRAM and /ifs will mount. On the other hand, if the node suffered a power disruption, the journal will be restored into DRAM from the M.2 vault flash instead (the PMP copies the journal into the M.2 vault during a power failure).

In the event that OneFS is unable to locate a valid journal on either the hard drives or M.2 flash on a node, it will retrieve a mirrored copy of the journal from its partner node over the NTB. This is referred to as ‘Sync-back’.

Note: Sync-back state only occurs when attempting to mount /ifs.
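The order of preference described above amounts to a first-match search. Here is a minimal Python sketch of that logic, offered as an illustration rather than the actual OneFS code path; the function name `pick_journal_source` and the dictionary of validity flags are hypothetical.

```python
from typing import Optional

# Order of preference, as listed in the table above.
PREFERENCE = ("local disk", "local vault", "partner node")


def pick_journal_source(valid: dict[str, bool]) -> Optional[str]:
    """Return the first location holding a valid journal copy, or None.

    'valid' maps each location name to whether a valid copy was found there.
    Choosing 'partner node' corresponds to the sync-back case.
    """
    for source in PREFERENCE:
        if valid.get(source, False):
            return source
    return None  # no valid journal copy anywhere


if __name__ == "__main__":
    # Clean shutdown: the disk backup is valid, so it wins.
    print(pick_journal_source({"local disk": True, "local vault": False, "partner node": True}))
    # Power loss: no disk backup, so the vault copy is used.
    print(pick_journal_source({"local disk": False, "local vault": True, "partner node": True}))
    # Neither local copy is valid: sync-back from the partner.
    print(pick_journal_source({"local disk": False, "local vault": False, "partner node": True}))
```

A `None` result in this sketch corresponds to the situation where no valid journal copy can be found for the node.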

On booting, if a node detects that its journal mirror on the partner node is out of sync (invalid), but the local journal is clean, /ifs will continue to mount. Subsequent writes are then copied to the remote journal in a process known as ‘sync-forward’.

Here’s a list of the primary journal states:

| Journal State | Description |
| --- | --- |
| Sync-forward | State in which writes to a journal are mirrored to the partner node. |
| Sync-back | Journal is copied back from the partner node. Only occurs when attempting to mount /ifs. |
| Vaulting | Storing a copy of the journal on M.2 flash during power failure. Vaulting is performed by PMP. |

During normal operation, writes to the primary journal and its mirror are managed by the MNVI device module, which writes through local memory to the partner node’s journal via the NTB. If the NTB is unavailable for an extended period, write operations can still be completed successfully on each node. For example, if the NTB link goes down in the middle of a write operation, the local journal write operation will complete. Read operations are processed from local memory.
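To make the write-through behavior concrete, here is a toy Python model. It is a sketch under stated assumptions, not the MNVI driver: the class `MirroredJournalSketch` is hypothetical, dictionaries stand in for the two nodes’ journal memory, and flagging the mirror as invalid after an unmirrored write is an assumption about how a stale mirror might be tracked.

```python
class MirroredJournalSketch:
    """Toy model: writes land locally and are mirrored while the link is up."""

    def __init__(self) -> None:
        self.local: dict[int, bytes] = {}    # this node's journal memory
        self.partner: dict[int, bytes] = {}  # mirror held on the partner node
        self.link_up = True                  # stands in for the NTB link state
        self.mirror_valid = True

    def write(self, block: int, data: bytes) -> bool:
        """Write a block; return True if it was also mirrored to the partner."""
        self.local[block] = data  # the local write always completes
        if self.link_up:
            self.partner[block] = data
            return True
        # Assumption: an unmirrored write leaves the partner copy stale.
        self.mirror_valid = False
        return False

    def read(self, block: int) -> bytes:
        """Reads are always served from local memory."""
        return self.local[block]


if __name__ == "__main__":
    journal = MirroredJournalSketch()
    journal.write(1, b"mirrored")
    journal.link_up = False  # simulate an NTB outage
    journal.write(2, b"local only")
    print(journal.read(2), journal.mirror_valid)
```

Note that the local write succeeds in both cases; only the mirroring step depends on the link.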

Additional journal protection for PowerScale chassis-based platforms is provided by OneFS’ powerfail memory persistence (PMP) functionality, which guards against PCI bus errors that can cause the NTB to fail. If an error is detected, the CPU requests a ‘persistent reset’, during which the memory state is protected and the node is rebooted. Once the node is back up, the journal is marked as intact and no further repair action is needed.

If a node loses power, the hardware notifies the BMC, initiating a memory persistent shutdown. At this point the node is running on battery power. The node is forced to reboot and load the PMP module, which preserves its local journal and its partner’s mirrored journal by storing them on M.2 flash. The PMP module then disables the battery and powers itself off.

Once power is restored and the node restarts, the PMP module first restores the journal before attempting to mount /ifs. The node then continues through system boot, validating the journal, setting sync-forward or sync-back states, and so on.

The mirrored journal has the following CLI commands, although these should seldom be needed during normal cluster operation:

- isi_save_journal

- isi_checkjournal

- isi_testjournal

- isi_pmp

A node’s journal can be checked and confirmed healthy as follows:

# isi_testjournal

Checking One external batteries Health...

Batteries good

Checking PowerScale Journal integrity...

Mounted DRAM journal check: good

IFS is mounted.

During boot, isi_checkjournal and isi_testjournal will invoke isi_pmp. If the M.2 vault devices are unformatted, isi_pmp will format the devices.

On clean shutdown, isi_save_journal stashes a backup copy of the /dev/mnv0 device on the root filesystem, just as it does for the NVRAM journals in previous generations of hardware.

If a mirrored journal issue is suspected, or notified via cluster alerts, the best place to start troubleshooting is to take a look at the node’s log events. The journal logs to /var/log/messages, with entries tagged as ‘journal_mirror’.
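As a starting point, the tagged entries can be pulled out of the log with a simple filter. The Python sketch below only assumes what the text states, namely that relevant entries contain the string ‘journal_mirror’; the function names are hypothetical, and no particular log line format is implied.

```python
from typing import Iterable, Iterator

TAG = "journal_mirror"  # tag named in the text


def journal_mirror_entries(lines: Iterable[str]) -> Iterator[str]:
    """Yield only the log lines that carry the journal_mirror tag."""
    for line in lines:
        if TAG in line:
            yield line.rstrip("\n")


def scan_messages(path: str = "/var/log/messages") -> list[str]:
    """Read a log file and return its journal_mirror entries."""
    with open(path, errors="replace") as handle:
        return list(journal_mirror_entries(handle))


if __name__ == "__main__":
    # Made-up lines purely to exercise the filter; not real OneFS log output.
    sample = [
        "placeholder line about something else\n",
        "placeholder line tagged journal_mirror\n",
    ]
    for entry in journal_mirror_entries(sample):
        print(entry)
```

On a node, the same result can of course be obtained by searching /var/log/messages for the tag directly.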

Additionally, the following sysctls provide information about the state of the journal mirror itself and the MNVI connection, respectively:

# sysctl efs.journal.mirror_state

efs.journal.mirror_state:

{

Journal state: valid_protected

Journal Read-only: false

Need to inval mirror: false

Sync in progress: false

Sync error: 0

Sync noop in progress: false

Mirror work queued: false

Local state:

{

Clean: dirty

Valid: valid

}

Mirror state:

{

Connection: up

Validity: valid

}

}

And the MNVI connection state:

# sysctl hw.mnv0.state

hw.mnv0.state.iocnt: 0

hw.mnv0.state.cb_active: 0

hw.mnv0.state.io_gate: 0

hw.mnv0.state.state: 3
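For scripted checks, the nested `efs.journal.mirror_state` output shown above can be parsed into a dictionary. The following Python sketch is one way to do it; the parser and the `looks_healthy` helper are hypothetical, and treating the values from the sample (‘valid_protected’, connection ‘up’, validity ‘valid’) as the healthy baseline is an assumption rather than a documented rule.

```python
SAMPLE = """\
efs.journal.mirror_state:
{
Journal state: valid_protected
Journal Read-only: false
Sync in progress: false
Sync error: 0
Local state:
{
Clean: dirty
Valid: valid
}
Mirror state:
{
Connection: up
Validity: valid
}
}
"""


def parse_sysctl_block(text: str) -> dict:
    """Parse brace-nested 'key: value' sysctl output into nested dicts."""
    root: dict = {}
    stack = [root]
    pending_key = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue  # tolerate blank lines between entries
        if line == "{":
            child: dict = {}
            stack[-1][pending_key] = child  # open the section named just before
            stack.append(child)
            pending_key = None
        elif line == "}":
            stack.pop()
        elif line.endswith(":"):
            pending_key = line[:-1]  # a section header such as 'Local state:'
        else:
            key, _, value = line.partition(":")
            stack[-1][key.strip()] = value.strip()
    return root


def looks_healthy(state: dict) -> bool:
    """Assumption: the sample's values represent a healthy mirrored journal."""
    mirror = state.get("Mirror state", {})
    return (
        state.get("Journal state") == "valid_protected"
        and mirror.get("Connection") == "up"
        and mirror.get("Validity") == "valid"
    )


if __name__ == "__main__":
    state = parse_sysctl_block(SAMPLE)["efs.journal.mirror_state"]
    print(state["Mirror state"], looks_healthy(state))
```

The sample string here is a shortened copy of the output above; the parser skips blank lines, so the full output can be fed in unchanged.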

OneFS provides the following CELOG events for monitoring and alerting about mirrored journal issues:

| CELOG Event | Description |
| --- | --- |
| HW_GEN6_NTB_LINK_OUTAGE | Non-transparent bridge (NTB) PCIe link is unavailable |
| FILESYS_JOURNAL_VERIFY_FAILURE | No valid journal copy found on node |

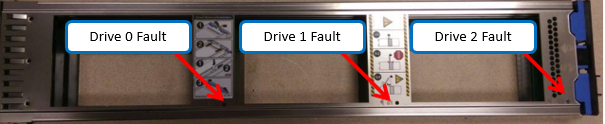

Another OneFS reliability optimization for the PowerScale chassis-based platforms is boot partition mirroring. OneFS boot and other OS partitions are stored on a node’s internal drives, and these partitions are mirrored (with the exception of crash dump partitions). The two mirrors protect against disk sled removal. Since each drive in a disk sled belongs to a separate disk pool, both elements of a mirror cannot live on the same sled.

With regard to the nodes’ internal drives, the boot disk reservation size has increased to 18GB on these new platforms, up from 8GB on the previous generation. Partition sizes have also been expanded in OneFS 9.11, as follows:

| Partition | H71x and A31x | H70x and A30x |
| --- | --- | --- |
| hw | 1GB | 500MB |
| journal backup | 8197MB | 8GB |
| kerneldump | 5GB | 2GB |
| keystore | 64MB | 64MB |
| root | 4GB | 2GB |
| var | 4GB | 2GB |
| var-crash | 7GB | 3GB |

OneFS automatically rebalances these mirrors in anticipation of, and in response to, service events. Mirror rebalancing is triggered by drive events such as suspend, softfail and hard loss.

The ‘isi_mirrorctl verify’ and ‘gmirror status’ CLI commands can be used to confirm that boot mirroring is working as intended. For example, on an A310 node:

# gmirror status

Name Status Components

mirror/root0 COMPLETE da10p3 (ACTIVE)

da11p3 (ACTIVE)

mirror/mfg COMPLETE da15p7 (ACTIVE)

da12p6 (ACTIVE)

mirror/kernelsdump COMPLETE da15p6 (ACTIVE)

mirror/kerneldump COMPLETE da15p5 (ACTIVE)

mirror/var-crash COMPLETE da15p3 (ACTIVE)

da9p3 (ACTIVE)

mirror/journal-backup COMPLETE da14p5 (ACTIVE)

da12p5 (ACTIVE)

mirror/jbackup-peer COMPLETE da14p3 (ACTIVE)

da12p3 (ACTIVE)

mirror/keystore COMPLETE da12p7 (ACTIVE)

da10p10 (ACTIVE)

mirror/root1 COMPLETE da11p7 (ACTIVE)

da10p7 (ACTIVE)

mirror/var0 COMPLETE da11p6 (ACTIVE)

da10p6 (ACTIVE)

mirror/hw COMPLETE da10p9 (ACTIVE)

da7p5 (ACTIVE)

mirror/var1 COMPLETE da10p8 (ACTIVE)

da7p3 (ACTIVE)

Or:

# isi_mirrorctl verify

isi.sys.distmirror - INFO - Mirror root1: has an ACTIVE consumer of da11p5

isi.sys.distmirror - INFO - Mirror root1: has an ACTIVE consumer of da10p7

isi.sys.distmirror - INFO - Mirror var1: has an ACTIVE consumer of da13p5

isi.sys.distmirror - INFO - Mirror var1: has an ACTIVE consumer of da16p5

isi.sys.distmirror - INFO - Mirror journal-backup: has an ACTIVE consumer of da12p5

isi.sys.distmirror - INFO - Mirror journal-backup: has an ACTIVE consumer of da16p6

isi.sys.distmirror - INFO - Mirror jbackup-peer: has an ACTIVE consumer of da12p3

isi.sys.distmirror - INFO - Mirror jbackup-peer: has an ACTIVE consumer of da14p3

isi.sys.distmirror - INFO - Mirror var-crash: has an ACTIVE consumer of da10p6

isi.sys.distmirror - INFO - Mirror var-crash: has an ACTIVE consumer of da11p3

isi.sys.distmirror - INFO - Mirror kerneldump: has an ACTIVE consumer of da14p5

isi.sys.distmirror - INFO - Mirror root0: has an ACTIVE consumer of da10p3

isi.sys.distmirror - INFO - Mirror root0: has an ACTIVE consumer of da13p6

isi.sys.distmirror - INFO - Mirror var0: has an ACTIVE consumer of da13p3

isi.sys.distmirror - INFO - Mirror var0: has an ACTIVE consumer of da16p3

isi.sys.distmirror - INFO - Mirror kernelsdump: has an ACTIVE consumer of da14p6

isi.sys.distmirror - INFO - Mirror mfg: has an ACTIVE consumer of da13p9

isi.sys.distmirror - INFO - Mirror mfg: has an ACTIVE consumer of da16p7

isi.sys.distmirror - INFO - Mirror hw: has an ACTIVE consumer of da10p8

isi.sys.distmirror - INFO - Mirror hw: has an ACTIVE consumer of da13p8

isi.sys.distmirror - INFO - Mirror keystore: has an ACTIVE consumer of da13p10

isi.sys.distmirror - INFO - Mirror keystore: has an ACTIVE consumer of da16p8

The A310 node’s disks in the output above are laid out as follows:

# isi devices drive list

Lnn Location Device Lnum State Serial Sled

---------------------------------------------------------

128 Bay 1 /dev/da1 15 L3 X3X0A0JFTMSJ N/A

128 Bay 2 - N/A EMPTY N/A

128 Bay A0 /dev/da4 12 HEALTHY WQB0QKBR A

128 Bay A1 /dev/da3 13 HEALTHY WQB0QHV4 A

128 Bay A2 /dev/da2 14 HEALTHY WQB0QHN3 A

128 Bay B0 /dev/da7 9 HEALTHY WQB0QH4S B

128 Bay B1 /dev/da6 10 HEALTHY WQB0QGY3 B

128 Bay B2 /dev/da5 11 HEALTHY WQB0QJWE B

128 Bay C0 /dev/da10 6 HEALTHY WQB0QJ26 C

128 Bay C1 /dev/da9 7 HEALTHY WQB0QHYW C

128 Bay C2 /dev/da8 8 HEALTHY WQB0QK6Q C

128 Bay D0 /dev/da13 3 HEALTHY WQB0QJES D

128 Bay D1 /dev/da12 4 HEALTHY WQB0QHGG D

128 Bay D2 /dev/da11 5 HEALTHY WQB0QKH5 D

128 Bay E0 /dev/da16 0 HEALTHY WQB0QHFR E

128 Bay E1 /dev/da15 1 HEALTHY WQB0QJWD E

128 Bay E2 /dev/da14 2 HEALTHY WQB0QKGB E

---------------------------------------------------------
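With the device-to-sled mapping in hand, the rule that both elements of a mirror cannot live on the same sled can be checked mechanically. The Python sketch below uses a subset of the `gmirror status` and `isi devices drive list` output above, transcribed by hand; the function names are hypothetical and this is not how OneFS itself performs the check.

```python
import re

# Subset of the 'isi devices drive list' output above: device -> sled.
DEVICE_SLED = {
    "da7": "B", "da9": "C", "da10": "C", "da11": "D",
    "da12": "D", "da14": "E", "da15": "E",
}

# Subset of the 'gmirror status' output above: mirror -> components.
MIRRORS = {
    "root0": ["da10p3", "da11p3"],
    "var-crash": ["da15p3", "da9p3"],
    "journal-backup": ["da14p5", "da12p5"],
    "hw": ["da10p9", "da7p5"],
    "kerneldump": ["da15p5"],  # crash dump partitions are not mirrored
}


def disk_of(component: str) -> str:
    """Strip the partition suffix, for example 'da10p3' -> 'da10'."""
    match = re.fullmatch(r"(da\d+)p\d+", component)
    if match is None:
        raise ValueError(f"unexpected component name: {component}")
    return match.group(1)


def same_sled_mirrors(mirrors: dict, device_sled: dict) -> list:
    """Return the names of mirrors with two or more components on one sled."""
    offenders = []
    for name, components in mirrors.items():
        sleds = [device_sled[disk_of(c)] for c in components]
        if len(sleds) != len(set(sleds)):
            offenders.append(name)
    return offenders


if __name__ == "__main__":
    print("mirrors sharing a sled:", same_sled_mirrors(MIRRORS, DEVICE_SLED))
```

For the mirrors listed here, each pair of components maps to two different sleds, so removing any single sled leaves one side of every mirror in place.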

When it comes to SmartFailing nodes, there are a few additional caveats to be aware of with the mirrored journal and the PowerScale chassis-based platforms:

- When SmartFailing one node in a pair, there is no compulsion to SmartFail its partner node too.

- A node will still run indefinitely with its partner absent. However, this significantly increases the window of risk since there is no journal mirror to rely on (in addition to lack of redundant power supply, etc).

- If a single node in a pair is SmartFailed, the other node’s journal is still protected by the vault and powerfail memory persistence.