The previous blog article outlining the investigation and troubleshooting of OneFS deadlocks and hang-dumps generated several questions about OneFS logfile gathering. So it seemed like a germane topic to explore in an article.

The OneFS ‘isi_gather_info’ utility has long been a cluster staple for collecting and collating context and configuration that primarily aids support in the identification and resolution of bugs and issues. As such, it is arguably OneFS’ primary support tool and, in terms of actual functionality, it performs the following roles:

- Executes many commands, scripts, and utilities on the cluster, and saves their results.

- Gathers all these files into a single ‘gzipped’ package.

- Transmits the gather package back to Dell via several optional transport methods.

By default, a log gather tarfile is written to the /ifs/data/Isilon_Support/pkg/ directory. It can also be uploaded to Dell via the following means:

| Transport Mechanism | Description | TCP Port |
|---|---|---|
| ESRS | Uses Dell EMC Secure Remote Support (ESRS) for gather upload. | 443/8443 |
| FTP | Uses FTP to upload the completed gather. | 21 |
| HTTP | Uses HTTP to upload the gather. | 80/443 |

More specifically, the ‘isi_gather_info’ CLI command syntax includes the following options:

| Option | Description |
|---|---|
| --upload <boolean> | Enable gather upload. |
| --esrs <boolean> | Use ESRS for gather upload. |
| --gather-mode (incremental \| full) | Type of gather: incremental or full. |
| --http-insecure-upload <boolean> | Enable insecure HTTP upload on completed gather. |
| --http-upload-host <string> | HTTP host to use for HTTP upload. |
| --http-upload-path <string> | Path on HTTP server to use for HTTP upload. |
| --http-upload-proxy <string> | Proxy server to use for HTTP upload. |
| --http-upload-proxy-port <integer> | Proxy server port to use for HTTP upload. |
| --clear-http-upload-proxy-port | Clear proxy server port to use for HTTP upload. |
| --ftp-upload <boolean> | Enable FTP upload on completed gather. |
| --ftp-upload-host <string> | FTP host to use for FTP upload. |
| --ftp-upload-path <string> | Path on FTP server to use for FTP upload. |
| --ftp-upload-proxy <string> | Proxy server to use for FTP upload. |
| --ftp-upload-proxy-port <integer> | Proxy server port to use for FTP upload. |
| --clear-ftp-upload-proxy-port | Clear proxy server port to use for FTP upload. |
| --ftp-upload-user <string> | FTP user to use for FTP upload. |
| --ftp-upload-ssl-cert <string> | Specifies the SSL certificate to use in FTPS connection. |
| --ftp-upload-insecure <boolean> | Whether to attempt a plain text FTP upload. |
| --ftp-upload-pass <string> | Password of the FTP user to use for FTP upload. |
| --set-ftp-upload-pass | Specify the FTP upload password interactively. |
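When gathers are scripted, it can help to assemble the command line from a small set of known options rather than by hand. The following is a minimal sketch, not part of OneFS: `build_gather_command` is a hypothetical helper, the host and path values are placeholders, and it assumes that options take a leading double hyphen and that boolean values are passed as the strings `true` and `false`.

```python
# Flags taken from the options table; this is a subset, not the full list.
KNOWN_FLAGS = {
    "upload", "esrs", "gather-mode",
    "ftp-upload", "ftp-upload-host", "ftp-upload-path", "ftp-upload-user",
    "http-upload-host", "http-upload-path",
    "clear-ftp-upload-proxy-port", "clear-http-upload-proxy-port",
}


def build_gather_command(**options):
    """Build an isi_gather_info argument list from keyword options.

    Keyword names map to flags by swapping underscores for hyphens,
    e.g. ftp_upload_host -> --ftp-upload-host.
    """
    argv = ["isi_gather_info"]
    for name, value in options.items():
        flag = name.replace("_", "-")
        if flag not in KNOWN_FLAGS:
            raise ValueError(f"unknown option: --{flag}")
        if value is None:
            # Flags that take no value, such as --clear-ftp-upload-proxy-port.
            argv.append(f"--{flag}")
        elif isinstance(value, bool):
            # Assumption: booleans are written as "true" / "false".
            argv.extend([f"--{flag}", "true" if value else "false"])
        else:
            argv.extend([f"--{flag}", str(value)])
    return argv


cmd = build_gather_command(
    upload=True,
    ftp_upload=True,
    ftp_upload_host="ftp.example.com",  # placeholder host
    ftp_upload_path="/incoming",        # placeholder path
    gather_mode="full",
)
print(" ".join(cmd))
```

Rejecting anything outside the known set keeps a typo in a script from silently turning into an unrecognized flag on the cluster.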

Once the gather arrives at Dell, it is automatically unpacked by a support process and analyzed using the ‘logviewer’ tool.

Under the hood, there are two principal components responsible for running a gather. These are:

| Component | Description |
|---|---|
| Overlord | The manager process, triggered by the user, which oversees all the isi_gather_info tasks that are executed on a single node. |
| Minion | The worker process, which runs a series of commands (specified by the overlord) on a specific node. |

The ‘isi_gather_info’ utility is primarily written in Python, with its configuration under the purview of MCP, and RPC services provided by the isi_rpc_d daemon.

For example:

    # isi_gather_info&
    # ps -auxw | grep -i gather
    root 91620 4.4 0.1 125024 79028 1 I+ 16:23 0:02.12 python /usr/bin/isi_gather_info (python3.8)
    root 91629 3.2 0.0 91020 39728 - S 16:23 0:01.89 isi_rpc_d: isi.gather.minion.minion.GatherManager (isi_rpc_d)
    root 93231 0.0 0.0 11148 2692 0 D+ 16:23 0:00.01 grep -i gather

The overlord uses isi_rdo (the OneFS remote command execution daemon) to start up the minion processes and informs them of the commands to be executed via an ephemeral XML file, typically stored at /ifs/.ifsvar/run/<uuid>-gather_commands.xml. The minion then spins up an executor and a command for each entry in the XML file.

The parallel process executor (the default) acts as a pool, launching commands until a specified number are running in parallel. The commands themselves take care of running and processing their results, checking frequently to ensure the timeout threshold has not been passed.

The executor also keeps track of which commands are currently running, and how many are complete, and writes them to a file so that the overlord process can display useful information. Once complete, the executor returns the runtime information to the minion, which records the benchmark file. The executor will also safely shut itself down if the isi_gather_info lock file disappears, such as if the isi_gather_info process is killed.
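The pool behavior described above can be illustrated with a short sketch. This is not the isi_gather_info source: `run_command` and `run_parallel` are hypothetical names, the sample commands are stand-ins, and progress is simply printed here rather than written to a file for the overlord to read.

```python
import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_command(name, argv, timeout):
    """Run one command and return (name, status, output)."""
    try:
        proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
        status = "ok" if proc.returncode == 0 else "failed"
        return name, status, proc.stdout
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child once the timeout is exceeded.
        return name, "timeout", ""


def run_parallel(commands, parallelism=4, timeout=30):
    """Run commands in a pool with at most `parallelism` active at once."""
    results = {}
    with ThreadPoolExecutor(max_workers=parallelism) as pool:
        futures = [
            pool.submit(run_command, name, argv, timeout)
            for name, argv in commands.items()
        ]
        for done, future in enumerate(as_completed(futures), start=1):
            name, status, output = future.result()
            results[name] = (status, output)
            print(f"{done}/{len(futures)} commands complete")
    return results


# Stand-in commands: one quick, one that exceeds the timeout.
commands = {
    "hello": [sys.executable, "-c", "print('hello')"],
    "slow": [sys.executable, "-c", "import time; time.sleep(5)"],
}
results = run_parallel(commands, parallelism=2, timeout=1)
```

A thread pool is used here only because each worker spends its time waiting on a child process; the real executor may be structured quite differently.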

During a gather the minion returns nothing to the overlord process, since the output of its work is written to disk.

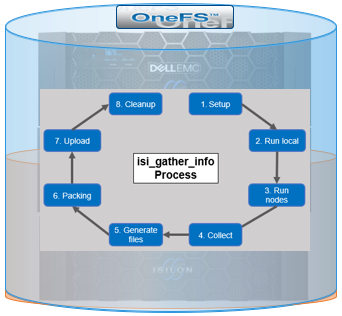

Architecturally, the ‘gather’ process comprises an eight-phase workflow:

The details of each phase are as follows:

| Phase | Description |
|---|---|
| 1. Setup | Reads the arguments passed in, as well as any config files on disk, and sets up the config dictionary, which is used throughout the rest of the codebase. Most of the code for this step is contained in isilon/lib/python/gather/igi_config/configuration.py. This is also the step where the program is most likely to exit, if some config arguments turn out to be invalid. |
| 2. Run local | Executes all the cluster commands, which are run on the same node that is starting the gather. All these commands run in parallel (up to the current parallelism value). This is typically the second longest running phase. |
| 3. Run nodes | Executes the node commands across all of the cluster’s nodes. This runs on each node, and while these commands run in parallel (up to the current parallelism value), they do not run in parallel with the local step. |
| 4. Collect | Ensures all of the results end up on the overlord node (the node that started the gather). If the gather is using /ifs, this is very fast, but if not, all the node results must be copied to a single node via SCP. |
| 5. Generate Extra Files | Generates nodes_info and package_info.xml. These two files are present in every gather, and provide important metadata about the cluster. |
| 6. Packing | Packs (tars and gzips) all the results. This is typically the longest running phase, often by an order of magnitude. |
| 7. Upload | Transports the tarfile package to its specified destination. Depending on geographic location, this phase might also take a long time. |
| 8. Cleanup | Cleans up any intermediary files that were created on the cluster. This phase runs even if the gather fails or is interrupted. |
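The guarantee that cleanup runs even when an earlier phase fails maps naturally onto a `try`/`finally` structure. The sketch below is purely illustrative, not the actual implementation: `run_gather` and the stub phase functions are hypothetical, and a simulated packing failure stands in for a real error.

```python
def run_gather(phases, cleanup, log):
    """Run phases in order; always run cleanup, even on failure."""
    try:
        for name, func in phases:
            func()
            log.append(f"{name} COMPLETE")
    except Exception as exc:
        log.append(f"{name} FAILED: {exc}")
        return False
    finally:
        # Runs on success, on failure, and on interruption (e.g. Ctrl-C).
        cleanup()
        log.append("cleanup COMPLETE")
    return True


def ok():
    pass


def fail():
    raise RuntimeError("disk full")  # simulated packing failure


log = []
cleaned = []
phases = [
    ("setup", ok),
    ("run local", ok),
    ("run nodes", ok),
    ("collect", ok),
    ("generate extra files", ok),
    ("packing", fail),
    ("upload", ok),
]
success = run_gather(phases, lambda: cleaned.append(True), log)
print("\n".join(log))
```

In this run the upload phase never starts, but the cleanup entry still appears at the end of the log.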

Since the isi_gather_info tool is primarily intended for troubleshooting clusters with issues, it runs as root (or compadmin in compliance mode), as it needs to be able to execute under degraded conditions (e.g., without GMP, during an upgrade, or under cluster splits). Given these atypical requirements, isi_gather_info is built as a stand-alone utility, rather than using the platform API for data collection.

The time it takes to complete a gather is typically determined by cluster configuration, rather than size. For example, a gather on a small cluster with a large number of NFS shares will take significantly longer than on a large cluster with a similar NFS configuration. Incremental gathers are not recommended, since the base that they need to check against in the log store may have been deleted. By default, gathers only persist for two weeks in the log processor.

On completion of a gather, a tarred and gzipped logset is generated and placed under the cluster’s /ifs/data/Isilon_Support/pkg directory by default. A standard gather tarfile unpacks to the following top-level structure:

    # du -sh *
    536M    IsilonLogs-powerscale-f900-cl1-20220816-172533-3983fba9-3fdc-446c-8d4b-21392d2c425d.tgz
    320K    benchmark
     24K    celog_events.xml
     24K    command_line
    128K    complete
    449M    local
     24K    local.log
     24K    nodes_info
     24K    overlord.log
     83M    powerscale-f900-cl1-1
     24K    powerscale-f900-cl1-1.log
    119M    powerscale-f900-cl1-2
     24K    powerscale-f900-cl1-2.log
    134M    powerscale-f900-cl1-3
     24K    powerscale-f900-cl1-3.log

In this case, for a three node F900 cluster, the compressed tarfile is 536 MB in size. The bulk of the data, which is primarily CLI command output, logs and sysctl output, is contained in the ‘local’ and individual node directories (powerscale-f900-cl1-*). Each node directory contains a tarfile, varlog.tar, containing all the pertinent logfiles for that node.
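To peek at a gather's top-level layout without unpacking it, Python's standard `tarfile` module can walk the archive members. The following is a rough sketch: `top_level_entries` is a hypothetical helper, and the demo builds a tiny stand-in archive with placeholder contents rather than reading a real gather.

```python
import io
import os
import tarfile
import tempfile


def top_level_entries(tar_path):
    """Return the sorted top-level names found inside a gather tarfile."""
    names = set()
    # "r:*" lets tarfile detect the compression (gzip for a .tgz) itself.
    with tarfile.open(tar_path, "r:*") as tar:
        for member in tar:
            # Drop empty and "." components, then keep the first one,
            # e.g. "local" from "./local/isi_stat".
            parts = [p for p in member.name.split("/") if p not in ("", ".")]
            if parts:
                names.add(parts[0])
    return sorted(names)


# Build a tiny stand-in archive so the sketch runs anywhere.
demo_path = os.path.join(tempfile.mkdtemp(), "demo-gather.tgz")
with tarfile.open(demo_path, "w:gz") as tar:
    for name in ("local/isi_stat", "overlord.log", "nodes_info"):
        data = b"placeholder\n"
        info = tarfile.TarInfo(name)
        info.size = len(data)
        tar.addfile(info, io.BytesIO(data))

entries = top_level_entries(demo_path)
print(entries)
```

Against a real gather, the same function would be pointed at the .tgz path reported at the end of the isi_gather_info run.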

The root directory of the tarfile includes the following:

| Item | Description |
|---|---|
| benchmark | Runtimes for all commands executed by the gather. |
| celog_events.xml | Info about the customer, including name, phone, email, etc. Also contains significant information about the cluster and individual nodes, including cluster/node names, node serial numbers, configuration ID, OneFS version info, and events. |
| command_line | Syntax of gather commands run. |
| complete | Lists of completed commands run across the cluster and on individual nodes. |
| local | See below. |
| nodes_info | General information about the nodes, including the node ID, the IP address, the node name, and the logical node number. |
| overlord.log | Gather execution and issue log. |
| package_info.xml | Cluster version details, GUID, S/N, and customer info (name, phone, email, etc). |

Notable contents of the ‘local’ directory (all the cluster-wide commands that are executed on the node running the gather) include:

| Local Contents Item | Description |
|---|---|
| isi_alerts_history | Appears to list all alerts that have ever occurred on the cluster. Each entry includes the event ID (which consists of the number of the initiating node and the event number), the times that the alert was issued and resolved, the severity, the logical node number of the node(s) to which the alert applies, and the message contained in the alert. |
| isi_job_list | Information about job engine processes, including job names, enabled status, priority policy, and descriptions. |
| isi_job_schedule | A schedule of when job engine processes run, including the job name, the schedule for the job, and the next time that a run of the job will occur. |
| isi_license | The current license status of all of the modules. |
| isi_network_interfaces | State and configuration of all the cluster’s network interfaces. |
| isi_nfs_exports | Configuration detail for all the cluster’s NFS exports. |
| isi_services | Listing of all the OneFS services and whether they are enabled or disabled. More detailed configuration for each service is contained in separate files. For example, for SnapshotIQ: snapshot_list, snapshot_schedule, snapshot_settings, snapshot_usage, and writable_snapshot_list. |
| isi_smb | Detailed configuration info for all the cluster’s SMB shares. |
| isi_stat | Overall status of the cluster, including networks, drives, etc. |
| isi_statistics | CPU, protocol, and disk IO stats. |

Contents of each ‘node’ directory include:

| Node Contents Item | Description |
|---|---|
| df | Output of the df command. |
| du | Output of the du command. Unfortunately, it runs ‘du -h’, which reports capacity in ‘human readable’ form, but makes the output more complex to sort. |
| isi_alerts | A list of outstanding alerts on the node. |
| ps and ps_full | Lists of all running processes at the time that isi_gather_info was executed. |
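Since the ‘du -h’ output is awkward to sort, one workaround is to convert the human-readable sizes back to bytes first. Here is a small sketch, assuming the single-letter binary suffixes (B, K, M, G, T, P) seen in the du output earlier in this article; `to_bytes` and `sort_du` are hypothetical helper names.

```python
UNITS = {"B": 1, "K": 1024, "M": 1024**2, "G": 1024**3, "T": 1024**4, "P": 1024**5}


def to_bytes(size):
    """Convert a 'du -h' style size such as '536M' or '4.0K' to bytes."""
    size = size.strip()
    suffix = size[-1].upper()
    if suffix in UNITS:
        return int(float(size[:-1]) * UNITS[suffix])
    return int(float(size))  # bare number with no suffix


def sort_du(lines):
    """Return (bytes, path) tuples sorted from largest to smallest."""
    rows = []
    for line in lines:
        if not line.strip():
            continue
        size, path = line.split(None, 1)
        rows.append((to_bytes(size), path.strip()))
    return sorted(rows, reverse=True)


sample = [
    "320K benchmark",
    "24K celog_events.xml",
    "449M local",
    "83M powerscale-f900-cl1-1",
    "119M powerscale-f900-cl1-2",
]
for size, path in sort_du(sample):
    print(f"{size:>12} {path}")
```

Note that the converted values are only as precise as the rounded figures du printed, so near-equal entries may not sort in their true order.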

As the isi_gather_info command runs, status is provided via the interactive CLI session:

    # isi_gather_info
    Configuring COMPLETE
    running local commands IN PROGRESS \
    Progress of local [######################################################## ] 147/152 files written \
    Some active commands are: ifsvar_modules_jobengine_cp, isi_statistics_heat, ifsvar_modules

Once the gather has completed, the location of the tarfile on the cluster itself is reported as follows:

    # isi_gather_info
    Configuring COMPLETE
    running local commands COMPLETE
    running node commands COMPLETE
    collecting files COMPLETE
    generating package_info.xml COMPLETE
    tarring gather COMPLETE
    uploading gather COMPLETE

    Path to the tar-ed gather is:

    /ifs/data/Isilon_Support/pkg/IsilonLogs-h5001-20220830-122839-23af1154-779c-41e9-b0bd-d10a026c9214.tgz

If the gather upload services are unavailable, errors will be displayed on the console, per below:

    …
    uploading gather FAILED
    ESRS failed - ESRS has not been provisioned
    FTP failed - pycurl error: (28, 'Failed to connect to ftp.isilon.com port 21 after 81630 ms: Operation timed out')
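If gathers are run from a wrapper script, the per-transport failure reasons can be pulled out of the console output for logging or alerting. The sketch below assumes each failure is reported on its own line in the `<transport> failed - <reason>` form shown above; `parse_upload_failures` is a hypothetical helper, and other OneFS releases may word these messages differently.

```python
import re

# Matches lines such as "FTP failed - <reason>".
FAILURE = re.compile(r"^(?P<transport>\w+) failed - (?P<reason>.+)$")


def parse_upload_failures(output):
    """Map each failed transport to the reason reported on the console."""
    failures = {}
    for line in output.splitlines():
        match = FAILURE.match(line.strip())
        if match:
            failures[match["transport"]] = match["reason"]
    return failures


console = """uploading gather FAILED
ESRS failed - ESRS has not been provisioned
FTP failed - pycurl error: (28, 'Failed to connect to ftp.isilon.com port 21 after 81630 ms: Operation timed out')
"""
failures = parse_upload_failures(console)
for transport, reason in failures.items():
    print(f"{transport}: {reason}")
```

The summary line "uploading gather FAILED" is deliberately not matched, since it carries no transport-specific reason.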